In February we attended one of the most important big data events of the year – Strata + Hadoop World. In addition to the great conversations we had with attendees and customers, SnapLogic Chief Scientist Greg Benson had the opportunity to talk to big data experts, data scientists and other enterprise IT leaders about the data lake and where SnapLogic fits in with Hadoop-scale integration. In his presentation he covered:

- SnapLogic’s vision of a unified integration platform as a service (iPaaS)

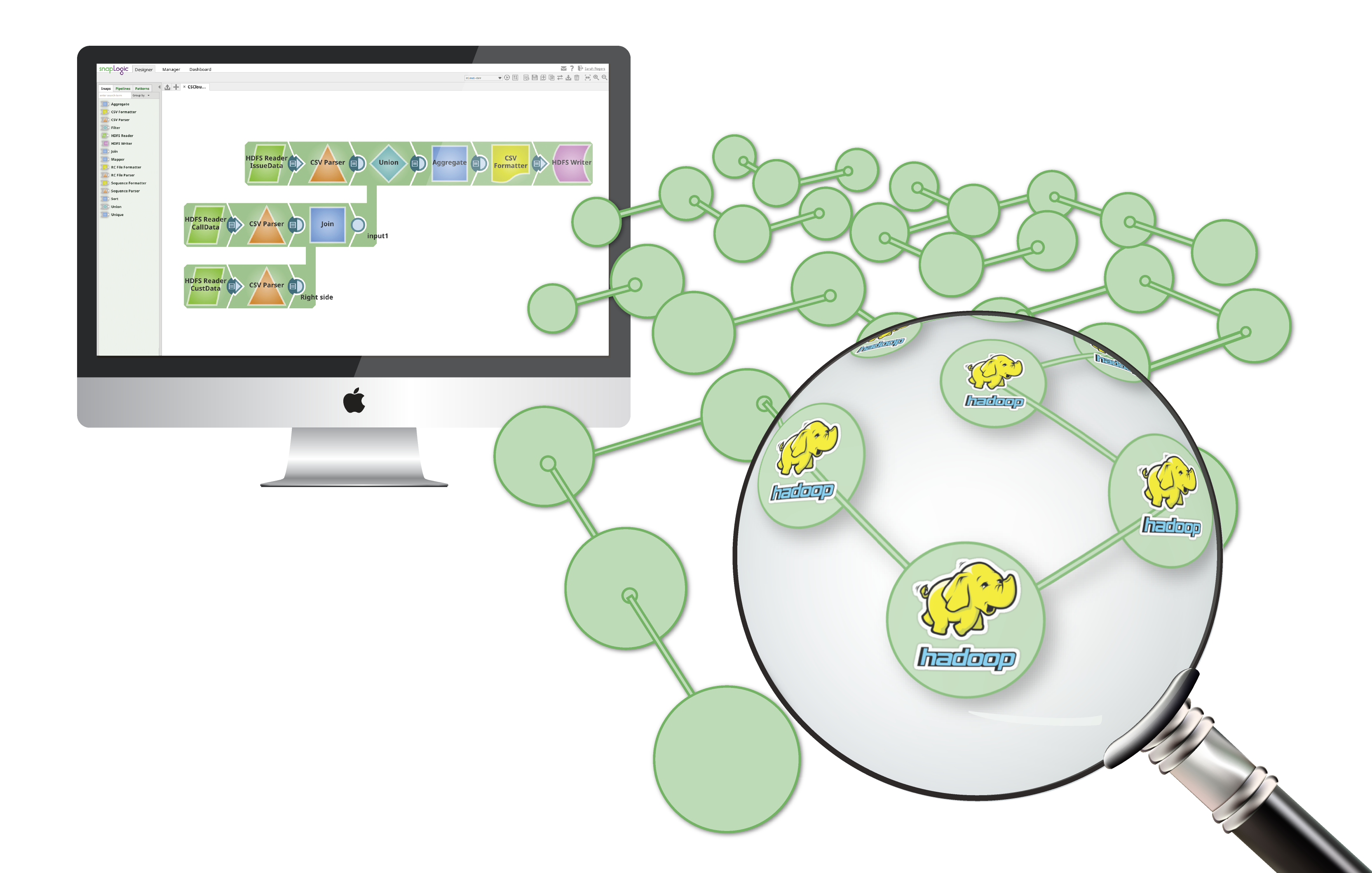

- The SnapLogic Designer for building big data integrations

- Key SnapLogic technologies, including Hadooplex, SnapReduce and SnapSpark

- Elastic integration at Hadoop-scale

- The data lake as a replacement for the enterprise data warehouse

Check out Greg’s recorded presentation below to learn more about how SnapLogic helps customers adopt Hadoop and automate data integration workflows. The full set of slides can also be found here for reference.

For additional information on the new world of big data – and the changing nature of data integration – take a look at this recent eBook from industry expert and analyst Mark Smith of Ventana Research: Attaining Excellence in Big Data Integration.