The other shoe dropped today. Last year Tibco was taken over by a private equity firm for $4.3 billion. Today it was announced that Informatica will be taken private in a deal worth $5.3 billion.

So in a matter of months, we’ve seen the market impact of enterprise IT shifting away from legacy data management and integration technologies as it deals with today’s social, mobile, analytics/big data, cloud computing and Internet of Things (SMACT) applications and data.

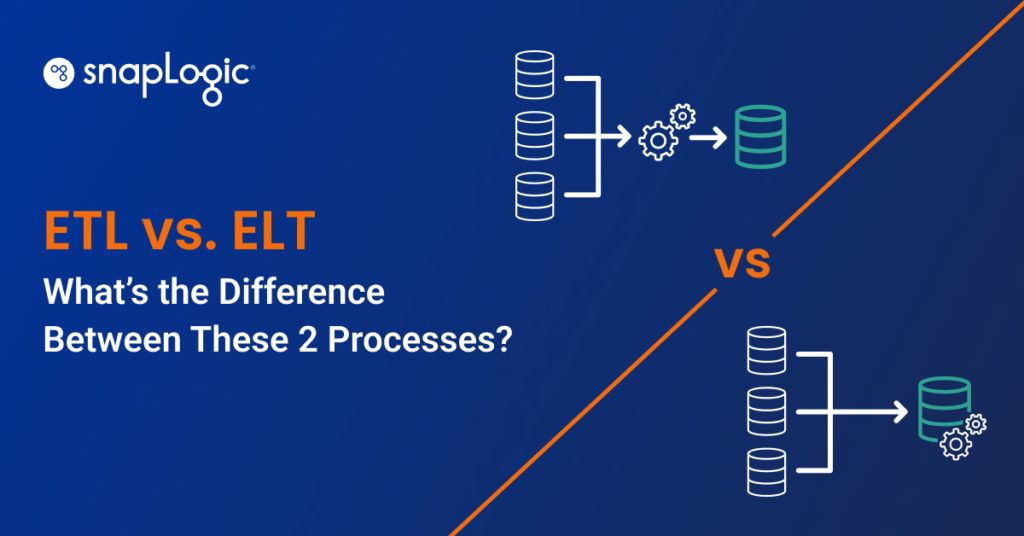

Last year I posted 10 reasons why old ETL and ESB technologies will continue to struggle in the SMACT era. They are:

- Cannibalization of the Core On-Premises Business

- Heritage Matters in the Cloud

- EAI without the ESB

- Beyond ETL

- Point to Point Misses the Point

- Franken-tegration

- Big Data Integration is not Core…or Cloud

- Elastic Scale Out

- An On-Ramp to On-Prem

- Focus and DNA

Last week, SnapLogic’s head of product management wrote the post “iPaaS: A new approach to cloud integration.” He had this to say about ETL or batch-only data integration:

“ETL is typically used for getting data in and out of a repository (data mart, data warehouse) for analytical purposes, and often addresses data cleansing and quality as well as master data management (MDM) requirements. With the onset of Hadoop to cost-effectively address the collection and storage of structured and unstructured data, however, the relevance of traditional rows-and-columns-centric ETL approaches is now in question.”

Industry analyst and practitioner David Linthicum goes further in his whitepaper, “The Death of Traditional Data Integration.” The paper covers the rise of services and streaming technologies, and when it comes to ETL he notes:

“Traditional ETL tools only focused on data that had to be copied and changed. Emerging data systems approach the use of large amounts of data by largely leaving data in place, and instead accessing and transforming data where it sits, no matter if it maintains a structure or not, and no matter where it’s located, such as in private clouds, public clouds, or traditional systems. JSON, a lightweight data interchange format, is emerging as the common approach that will allow data integration technology to handle tabular, unstructured, and hierarchical data at the same time. As we progress, the role of JSON will become even more strategic to emerging data integration approaches.”
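To make the JSON point a bit more concrete, here is a minimal Python sketch of one routine walking a tabular row, a hierarchical document and a free-text record in the same way. The sample records and the `flatten` helper are hypothetical illustrations, not taken from the whitepaper or from any product.

```python
import json


def flatten(value, prefix=""):
    """Walk any parsed JSON value and return {path: leaf_value} pairs.

    Hypothetical helper for illustration only.
    """
    if isinstance(value, dict):
        out = {}
        for key, child in value.items():
            # Join nested keys with dots, e.g. "order.id"
            out.update(flatten(child, f"{prefix}.{key}" if prefix else key))
        return out
    if isinstance(value, list):
        out = {}
        for i, child in enumerate(value):
            # Index list elements, e.g. "order.items[0]"
            out.update(flatten(child, f"{prefix}[{i}]"))
        return out
    # Scalars (strings, numbers, booleans, null) are leaves
    return {prefix: value}


# Made-up sample records: a flat row, a nested document, and free text
tabular_row = json.loads('{"id": 1, "name": "Acme", "region": "West"}')
hierarchical = json.loads(
    '{"order": {"id": 7, "items": [{"sku": "A1", "qty": 2}, {"sku": "B2", "qty": 1}]}}'
)
unstructured = json.loads('{"body": "Customer called about a late shipment."}')

for doc in (tabular_row, hierarchical, unstructured):
    print(flatten(doc))
```

The same function handles all three shapes without a schema defined up front, which is roughly the flexibility the quote attributes to JSON.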

And finally, Gaurav Dhillon, SnapLogic’s co-founder and CEO, who also co-founded and ran Informatica for 12+ years, had this to say recently about the old way versus the new way of ensuring enterprise data and applications stay connected:

“We think it’s false reasoning to say, ‘You have to pick an ESB for this, and ETL for that.’ We believe this is really a ‘connective tissue’ problem. You shouldn’t have to change the data load, or use multiple integration platforms, if you are connecting SaaS apps, or if you are connecting an analytics subsystem. It’s just data momentum. You have larger, massive data containers, sometimes moving more slowly into the data lake. In the cloud connection scenario, you have lots of small containers coming in very quickly. The right product should let you do both. That’s where iPaaS comes in.”

At SnapLogic, we’re focused on delivering a unified, productive, modern and connected platform for companies to address their Integrator’s Dilemma and connect faster, because bulldozers and buses aren’t going to fly in the SMACT era.

I’d like to wish all of my former colleagues at Informatica well as they go through the private equity process. (Please note that SnapLogic is hiring.)