Featured

Search Results

-

5 min read

The Identity Problem at the Heart of Enterprise AI

-

3 min read

Why Your AI Agent Needs to Read Before It Writes

-

9 min read

Best iPaaS for Higher Education: A Complete Guide for University Technology Leaders in 2026

-

4 min read

Your AI Agent Has Too Much Access (And You Probably Don’t Know It)

-

8 min read

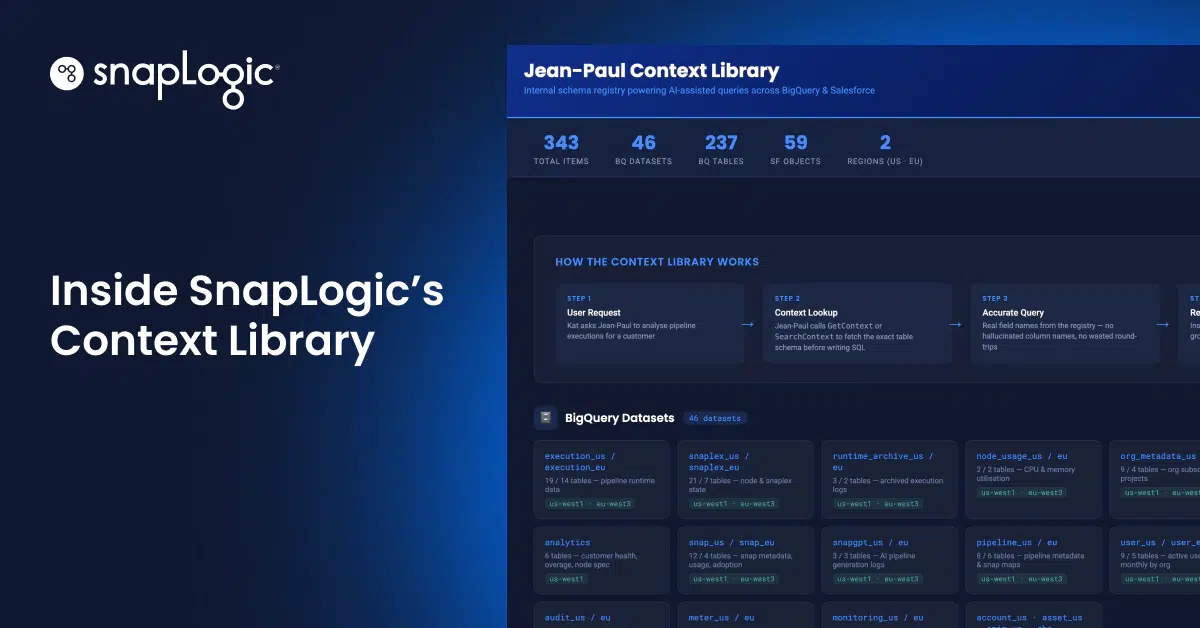

The Context Library That Keeps Enterprise AI Grounded in Live Data

-

6 min read

From AI Experiments to Evidence: SnapLogic at Gartner Data & Analytics London

-

5 min read

What’s New in SnapLogic: May 2026 Product Release

-

10 min read

Best iPaaS for Software and Technology Companies: A Complete Guide for Enterprise Leaders (2026)

-

2 min read

MCP Server Token Propagation

-

7 min read

Your Data Agent Is Only as Smart as Its Context (So We Built One That Gets It Right)

-

4 min read

The Revenue Signal You’re Missing: Why Integration Is the Foundation of Customer Retention

-

6 min read

Gartner’s New Hype Cycle Shows Agents Are About to Explode: Why Integration Is the Real Battleground