Today’s post comes from Joseph A. di Paolantonio, an industry expert working at the convergence of IoT with data management and analytics at DataArchon.com and the Boulder BI Brain Trust. Leveraging a career that started with renewable energy research in graduate school and industry, developing risk assessment models and algorithms for aerospace systems, and managing teams for enterprise data warehousing, BI and data science, Joseph is defining sensor analytics ecosystems to bring value from the IoT.

What’s IoT All About?

We have been asking what the IoT is all about for a very long time: since Kevin Ashton first coined the phrase in 1999, and perhaps even since Nikola Tesla first played with a remote control boat in 1898. For many, the simple act of connecting a device (not a computer, not a router) to the Internet is enough. But even if everything around us, at work, at home, for business, for play, were connected, would that make the Internet of Things? Would it fulfill much of the hype or any of the stories surrounding the IoT? Not at all.

As we look at the way the IoT is developing, what we actually see is an Internet of a few things, or many Intranets of similar things. We see many verticals arising, such as the Industrial Internet of Things, with even finer-grained silos being created within each industrial vertical. Subsets of the IoT are being created in traditional verticals, such as healthcare, public transportation, self-driving cars, agriculture and mining. And old markets, such as home automation, advanced metering infrastructure and urban planning, are being revitalized through the IoT as smart homes, smart grids and smart cities. But whether one goes back to Tesla’s experiments with RC, or the first embedded computers of the 1980s, or even the RFID (radio frequency identification) chips in CPG (consumer packaged goods) that led Mr. Ashton to coin the term, it is still early days for the IoT. Make no mistake, the IoT is here, but at varying maturity levels as we advance along a model of Connect, Communicate, Collaborate, Contextualize and Cognition.

The common thread among all these differing implementations of IoT concepts is the use of evolving data management and analytics (DMA). This evolution has been accelerating through the concepts of big data and, more recently, fast data. Another common thread is that organizations coupling advanced DMA with IoT technologies and processes are all seeing similar value propositions:

- Customer Understanding and Retention

- Bottom Line Improvements

- Process Efficiencies

- Predictive analytics for maintenance, recommendations, churn and purchases

All of these and more will be enhanced as the silos in IoT begin to support solution spaces that are uniquely served through the processes and technologies of IoT DMA, and even more so as these evolve into sensor analytics ecosystems (SAE).

IoT Data Management and Sensor Analytics

The value to be derived from IoT, whether we are talking about all the vertical IoTs or one vast overarching IoT, comes from data. The main point is that this value can be found in the Cloud, at other central points such as the “back office” or a command center, at the Edge, where the sensors and actuators live, and at every point in between. Take transportation as one example. Every Internet-connected sensor and actuator in a vehicle needs to communicate with other sensor packages within the vehicle and at parking spaces. Vehicles on a common road or in a common area can collaborate. The vehicle needs context from other data, taken from hubs and gateways around a city and then from the cloud, for purposes such as avoiding traffic hazards, detecting a driver becoming erratic or finding available parking. And we are already seeing examples of cognition in autonomous vehicles and delivery robots. What started with the concepts of big data is accelerating into an amazingly complex interweaving of numerous data flows, both real-time and historic, updating algorithms, prior knowledge and training sets on the fly, as each small data set from each sensor-actuator pair builds over collections of objects and over time. Of course, not all of these data sets will be compatible. We have been dealing with such data incompatibilities since the first data warehouse rolled out. But could you imagine trying to handle IoT scenarios with a 1990s data warehouse project? Not at all. Perhaps standards can help.

Standards are great… There are so many of them

We track over thirty standards bodies, many with hundreds of overlapping and conflicting standards for IoT in general or for specific verticals, covering IoT data transport, sensor data packages, semantic protocols and IoT communication protocols. All of these standards can be very confusing, and determining how they fit into your data flow and business processes can be daunting. More importantly, we will look at how application programming interfaces and metadata can help in your efforts to adopt IoT concepts in your organization today. Just as we can’t envisage attempting to solve IoT data management as we would a data warehouse in the 1990s, we need to seek new solutions for bringing IoT data together. For example, one sensor or IoT platform may package its data as JSON, and another as XML. Replace a sensor that uses JSON with one that uses XML and, in addition to parsing the two formats, we must also recognize that the new data represents a continuation of the old data, so that the sensor analytics from this location continue smoothly. We may be required to ingest the data via MQTT or CoAP, or through streaming SQL in Apache Calcite. Perhaps we will wish to score the data in real time against machine learning models as we collect it. Time-series data, location data and all the various metadata about sensors, actuators, packages, devices, algorithms and specific uses need to be managed with two-way traceability, providing an ongoing data lineage. The tools and architecture for handling IoT data and building SAE are very different from those that served the business operations and needs of a decade ago.
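To make the JSON-to-XML sensor swap concrete, here is a minimal Python sketch of one way it might be handled. It assumes, purely for illustration, that both payloads carry the same five fields (`location`, `sensor`, `ts`, `value`, `unit`) and that the location identifier stays fixed when the hardware changes. The field names, the `Reading` type and the sample payloads are all hypothetical and do not come from any particular standard or platform.

```python
import json
import xml.etree.ElementTree as ET
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    """Format-neutral record; all field names are illustrative assumptions."""
    location_id: str  # stable key for the measurement point, not the device
    sensor_id: str    # changes when the hardware is replaced
    timestamp: str    # ISO 8601 string in UTC
    value: float
    unit: str


def parse_json(payload: str) -> Reading:
    d = json.loads(payload)
    return Reading(d["location"], d["sensor"], d["ts"], float(d["value"]), d["unit"])


def parse_xml(payload: str) -> Reading:
    root = ET.fromstring(payload)
    return Reading(
        root.findtext("location"),
        root.findtext("sensor"),
        root.findtext("ts"),
        float(root.findtext("value")),
        root.findtext("unit"),
    )


def parse(payload: str) -> Reading:
    # Crude format detection: XML documents start with '<'.
    if payload.lstrip().startswith("<"):
        return parse_xml(payload)
    return parse_json(payload)


def build_series(payloads):
    """Group readings by location so a sensor swap does not break the series."""
    series = {}
    for payload in payloads:
        reading = parse(payload)
        series.setdefault(reading.location_id, []).append(reading)
    for readings in series.values():
        # Same-format UTC ISO 8601 strings sort chronologically as text.
        readings.sort(key=lambda r: r.timestamp)
    return series


# Hypothetical payloads: the old JSON sensor, then its XML replacement.
old_json = ('{"location": "pump-7", "sensor": "A-100", '
            '"ts": "2016-05-01T10:00:00Z", "value": 21.5, "unit": "C"}')
new_xml = ("<reading><location>pump-7</location><sensor>B-200</sensor>"
           "<ts>2016-05-01T10:05:00Z</ts><value>21.9</value><unit>C</unit></reading>")

series = build_series([new_xml, old_json])
```

Keying the series on the location rather than the device is the design choice that lets the analytics continue across the swap, while the retained `sensor_id` on each reading preserves lineage back to the hardware that produced it.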

Architecture

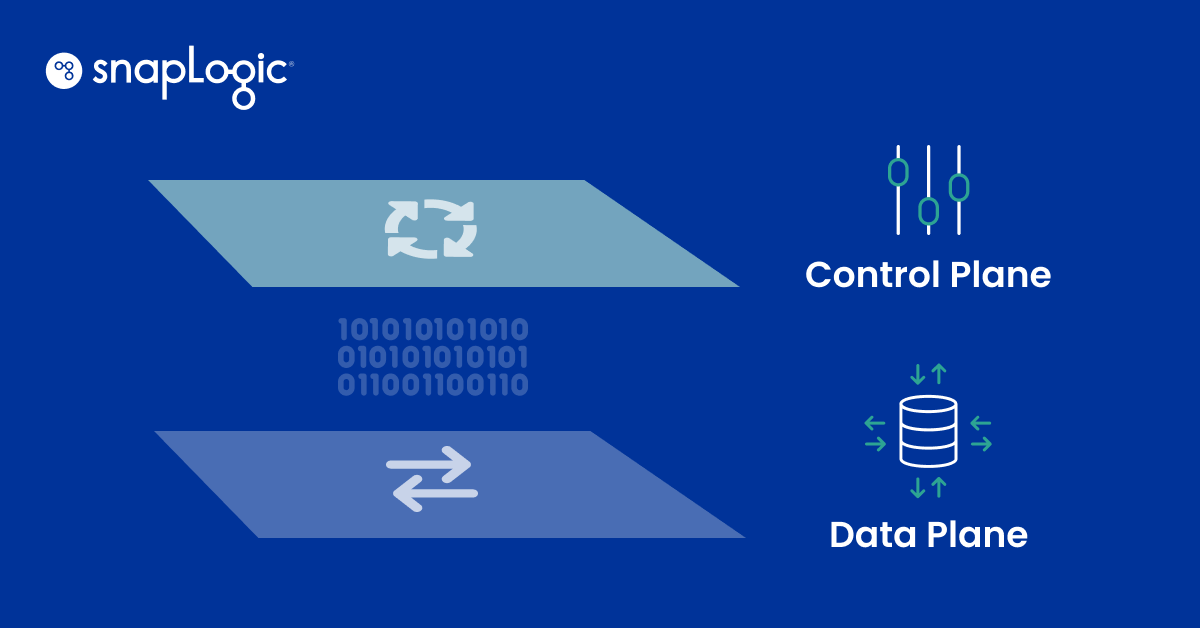

Data architecture can no longer be based on the simple flow of data from in-house transactional systems into data warehouses for reporting and online analytical processing (OLAP). For an excellent review of a modern data architecture, with implementation through the data lake concept, take a look at the white paper by Mark Madsen of Third Nature. A data architecture that incorporates IoT data must consider several factors that aren’t standard:

- streaming analytics

- interactions among collection, aggregation and analytics points from sensor package to network edge to gateways to regional centers to cloud and back again

- updates of prior knowledge, training sets and test distributions, and models

- evolving algorithms

- open data

- changing third-party sources

- deriving context from the edge for central OT and IT systems, cloud, on-premises or hybrid

- bringing context to the edge from everywhere else

Takeaways

Throughout the webinar, we will discuss five ways that participants can prepare for IoT in their own organizations:

- Identify instrumented products and services

- Define potential improvements from IoT data

- What data do you currently collect? What data are available that you don’t collect? What data do you need to meet the improvements?

- How will you manage and integrate the new data sets across all collection, storage and use points from the Edge to the Cloud?

- What DMA infrastructure is available or is needed for real-time data ingress, and combining real-time and historical data?