“‘When I use a word,’ Humpty Dumpty said, in rather a scornful tone, ‘it means just what I choose it to mean — neither more nor less.’

‘The question is,’ said Alice, ‘whether you can make words mean so many different things.’”

– Through the Looking-Glass, Lewis Carroll

“Big Data”, like most buzzwords, has generated many partially overlapping definitions. (In fact, the author has come to believe that, just like herds of cows and murders of crows, collections of definitions need their own collective noun. He respectfully submits “opinion”, as in “an opinion of definitions”, as the natural choice.) This post is not about adding another definition of Big Data. It is about considering the operational and architectural implications of calling something Big Data.

So grab your definition(s) of choice and a representative handful of your data, and consider the following:

- Is every piece of my data vital? Would I be upset if a small, random percentage just wandered off and became non-data?

- Do I require the guarantees that relational databases have traditionally provided? (Such as ACID transactions and rigid, precisely specified relationships between data.)

- Are there insights to be gathered from data in the aggregate that can’t be found when analyzing only a subset?

- Is my data unstructured? Generated by IoT devices? Generated by user activity in applications or web services?

Note the lack of questions about dataset volume, velocity, or variety. These are important considerations, but it’s also useful to think of Big Data as a paradigm in which you accept certain tradeoffs that you traditionally would not, or as an ecosystem in which certain solution patterns get used.

For instance, imagine you are processing financial transactions. Your answers to the first two questions would be “Yes”, “Extremely”, and “Yes”. This piece of your system probably needs to be a traditional relational database. Why? Because Big Data architectures typically tolerate some data loss. After all, you have lots of data.

While that tolerance seems absurd for financial transactions, remember that most Big Data technologies grew out of ‘Web 2.0’ companies solving the problems they were facing. Google used MapReduce (which later inspired Hadoop) to index the Web. Kafka helped LinkedIn process log data. In both cases, the occasional lost datum was of no consequence.

On the other hand, if you answered “Yes” to the last two questions, your data is probably best processed using Big Data technologies. Here you have so much data that no individual piece is particularly important, but the aggregate – and the ability to analyze the aggregate – is. What you need is an easy-to-use way of working with that data.

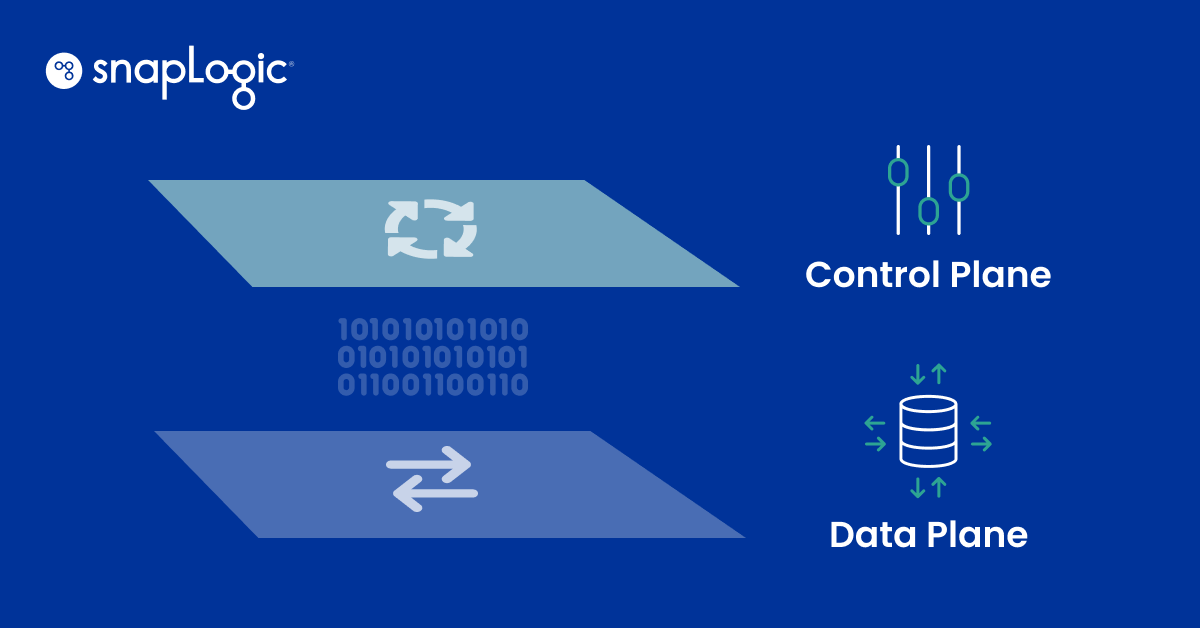

The SnapLogic Elastic Integration Platform handles small data, medium data, Big Data, cloud data, batch data, or streaming data – basically, it handles data, regardless of the adjectives you want to apply to it. You don’t have to choose one size for all of your data. The benefit of having a data lake is that each part of your infrastructure can use the solution that works best for its application, yet all the pieces can be connected together seamlessly. We can work with you to deploy an overall architecture that’s right for your needs today and that can scale to meet tomorrow’s.