Agentic

Integration

Tips and Tricks

Join the agentic integration movement around the world and receive the SnapLogic Blog Newsletter.

Featured

Search Results

-

4 min read

No More Environment Conflicts: How E-Pods Gives Every Developer at SnapLogic Their Own Production Clone

-

4 min read

What Good AI Governance Looks Like Inside a Bank

-

5 min read

From Integration to Execution: Powering the Next Phase of Enterprise AI

-

9 min read

Best iPaaS for Manufacturing: A Complete Guide for Enterprise Leaders (2026)

-

3 min read

SnapLogic Leads the Way in the New Era of AI Agent Platforms

-

4 min read

From Bootcamp to Breakthrough: Building Real-World AI Agents

-

3 min read

Financial Services Data Modernization and AI Readiness: What Leaders Are Discussing Ahead of FIMA

-

4 min read

Breaking the Decision Gap: What Insurance Leaders Told Us Over Breakfast

-

7 min read

SnapLogic vs. MuleSoft: A Modern Integration Platform Comparison (2026)

-

3 min read

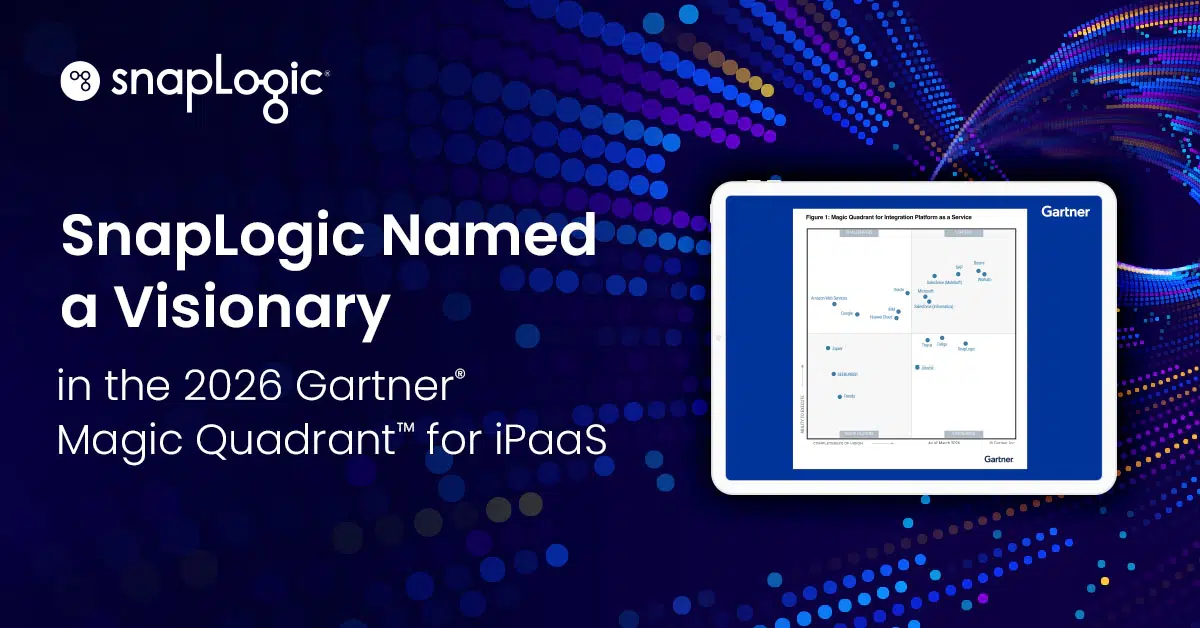

SnapLogic Recognized for Integration That Drives Enterprise Results

-

7 min read

The Enterprise Rollout: Hybrid Execution and the Path to Operational AI

-

5 min read

7 Ways SnapLogic Is Redefining Enterprise Integration with AI and SnapGPT